The Long Way Here, Now: Beatniks, Buddhism, and the Bridge to AI

Before I had a clean career narrative, I had questions I couldn’t stop asking: about perception, attention, and why certainty can feel so convincing. Over time, I realized I didn’t just want answers. I wanted methods: ways to test my intuitions against reality and notice when I was fooling myself.

“The first principle is that you must not fool yourself—and you are the easiest person to fool.” — Richard Feynman

Looking back, I can trace that obsession to an earlier place: the stage.

The Stage

I was a theater kid: improv, Shakespeare, rehearsals that ran too late. Playing characters made something obvious in a way nothing else had: the “self” isn’t one solid thing. It shifts with voice, body, context.

I did everything from kabuki-style Shakespeare (Macbeth with stylized movement and masks) to playing a silent yellow bird in You’re a Good Man, Charlie Brown.

“…” — Woodstock (entire script)

It sounds random, but it taught me something early: identity is more flexible than it feels from the inside. One night I’m channeling ambition and blood and prophecy; the next I’m communicating with two gestures and a head tilt. All of it coming out of the same nervous system.

That realization didn’t answer anything, but it cracked something open. It made me want to look directly at the machinery underneath the performance.

In high school I gravitated toward writers who treated reality as something you could inspect. Alan Watts gave me permission to look. Then the beatniks: Kerouac, Ginsberg, that holy-mess lineage of American seekers. What hooked me wasn’t just the road. It was the refusal to live by inheritance alone.

The Search

“When you bow, you should just bow; when you sit, you should just sit; when you eat, you should just eat.” — Shunryu Suzuki

That curiosity pulled me into Zen meditation and yoga. I read widely: Krishnamurti, Shunryu Suzuki, Ram Dass, Ramana Maharshi. Anyone pointing at the same thing from different angles: how easily the mind turns experience into story.

The practices were simple but ruthless: sit down, watch the mind, notice how quickly it narrates, clings, performs, and calls it “truth.” Krishnamurti especially pierced the veil. He didn’t let you hide behind doctrine. He asked you to look. Zen did the same, just quieter. Sit down. Shut up. See what’s there.

Eventually I decided I needed reality to push back harder. I didn’t want mindfulness that only worked in a comfortable room with incense.

So I packed my kit and left: a yoga mat, my bass guitar, and fifty-odd books. [Skills: flexibility, low-end groove, overthinking.] I traveled around Asia for two years.

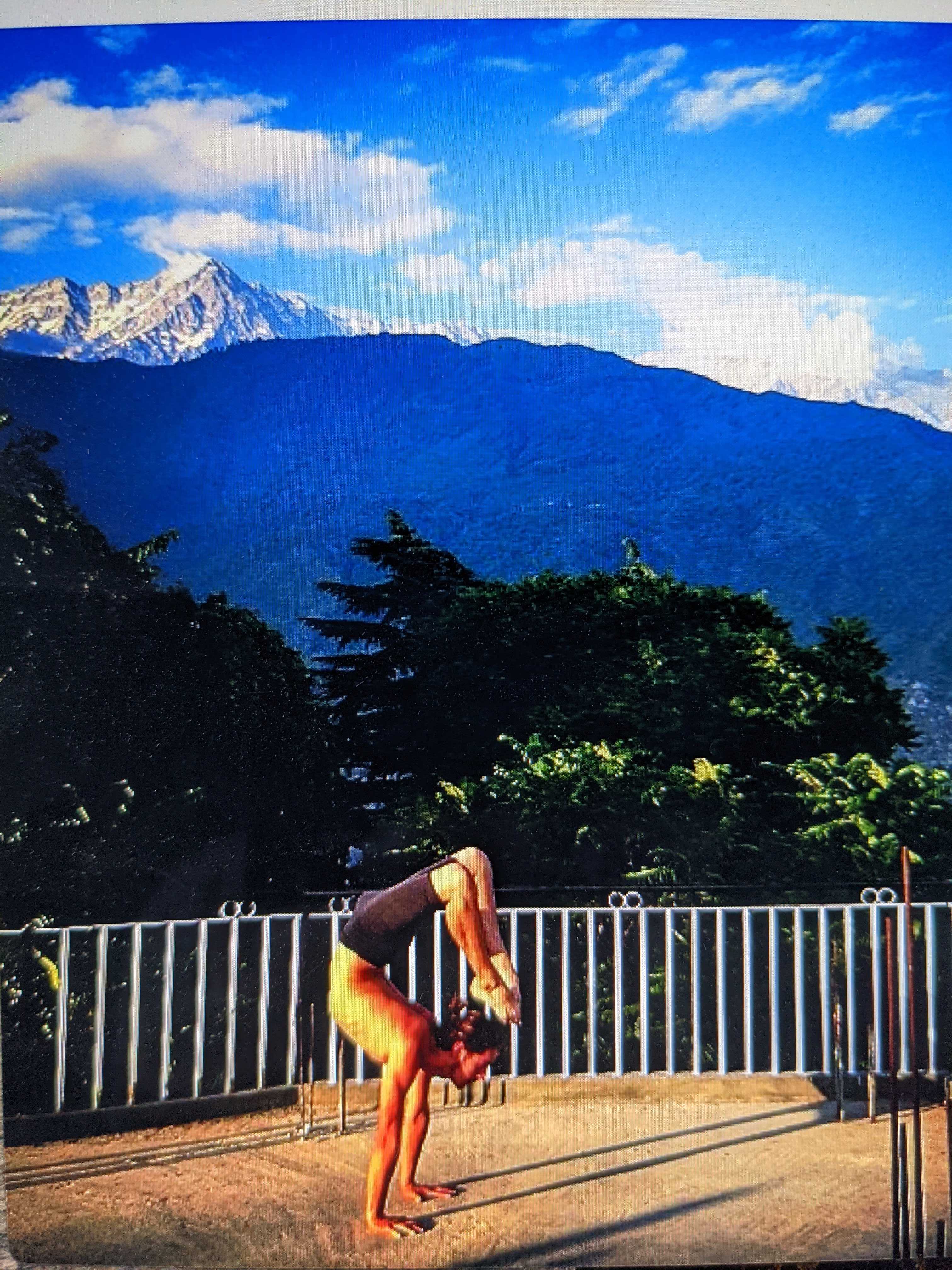

Scorpion handstand in Dharamsala, India (2012).

I sat in a monastery in Thailand. I taught English to Tibetan refugees in India. I met people whose lives had been stripped down to essentials: discipline, community, survival, meaning.

Somewhere along the way I ran into a phrase from the Indian tradition: sat-chit-ananda (truth, consciousness, bliss). I felt it most vividly in Pokhara, Nepal, near the Annapurna range. Midnight, my now-wife and I walking back to a guesthouse that cost $2.50 a night. Fireflies everywhere, wild horses grazing by the road, a full moon over the lake. The happiest moment of my life.

I don’t treat sat-chit-ananda as a metaphysical claim. I treat it as a compass: honesty about what’s real (sat), clarity about experience (chit), and stable wellbeing that isn’t dependent on constant grasping (ananda).

Travel didn’t make me “enlightened.” It made me less gullible. It showed me how easy it is to spiritualize your own confusion—and that some practices genuinely work, but only if you stop wearing them as costumes and start using them as tools.

The Method

Once you’ve seen how easily the mind performs, you start craving methods that don’t care how convincing you are.

Somewhere during those years, I realized the inner search wasn’t tangential to science. It was training for it.

So I started wanting tools that push back. That’s what science became for me: a discipline where your story doesn’t get the final vote. You make a hypothesis. You test it. You quantify uncertainty. You get humbled. You revise. You build things other people can interrogate.

For me, that discipline took the shape of models—systems you can train, break, measure, and stress-test. Computational biology and AI became the closest bridge I’ve found between inner and outer worlds: representation, meaning, and prediction made concrete.

“Well, I’m back.” — Samwise Gamgee

I came home and started over. Community college was the reset. Field ecology was the training ground. From there I transferred to Cal State San Marcos, then UC San Diego, and into a PhD at the Salk Institute.

Now I build deep learning systems for personalized regulatory genomics: variant-aware sequence-to-function modeling, rigorous benchmarking, and allele-specific methods to predict cell-type-specific effects in autoimmune disease.

I still sit zazen and practice yoga. Not as a lifestyle claim. More as a reminder that cognition is fallible, confidence is cheap, and rigor is what keeps you honest.

Coherence Is Not Evidence

The danger isn’t that models can talk. It’s that they can talk well.

Modern AI systems are the most convincing performers we’ve ever built. They generate fluent narratives, confident explanations, and moral framing on demand—whether or not any of it is grounded. If you’ve spent years watching your own mind manufacture certainty, you develop a healthy allergy to coherence as evidence.

I’m not an AI safety researcher (my work is in regulatory genomics), but the same habits that make science honest are what draw me to safety. A model that sounds aligned isn’t necessarily aligned. A model that offers a beautiful explanation isn’t necessarily revealing what drove its output. The work I want to contribute to is the work that keeps us honest: evaluation harnesses that fail loudly under distribution shift, interpretability tools that distinguish rationalization from stable behavior, and deployment norms that treat “feels good” as the start of inquiry, not the end.

These systems shape attention, belief, and decision-making at scale. Even if today’s models aren’t conscious, they shape conscious lives. That makes safety a moral problem as much as a technical one.

The goal isn’t just capability. It’s AI that helps humanity move closer to sat (truth) without forgetting chit (consciousness) and without trampling whatever ananda (wellbeing) might still be possible in a world run at machine speed.

I’ve spent my life trying to reduce my own self-deception. I want to bring that same discipline to AI safety research.

The books that shaped this path: Reading List
